OpenACC $ Reedbush-H

Transcript of OpenACC $ Reedbush-H

OpenACC���$Reedbush-H�����������#�% ��� � �

���#�"�! #�&('&�' 1

20191�8�&�'10:25 – 12:10

2019/1/8

92�,i��;�:+(j

1. 9�25�o LKSgR2. 10�2�

l ����%F�!$/i��j

3. 10�9�oRWPg &<�l fOKg�"BURVYfOb]�4

4. 10�16�l A�3YfOb\gO�#F�*li>�^_cBdhYJgfhcgOj

5. 10�23�l A�3YfOb\gO�#F�*2

iM`TQaXfTN�j

6. 10�30�l 4�-ZNVd-F���

2019/1/8 RWPgYfOb\gOilji�j

7. 11�6�l HD�#F���

8. 11�20�l 4�-4�-F���ilj

9. 11�27�l 4�k4�-F���imj

10. 12�4�l pq6#ilj

l PgURV8@'5

11. 12�11�1=l pq6#imj

12. 12�11�2=l pq6#injB?� :�

13. 1�8�l RB-HC7EB).0��

e[hVCIGPgURV8@i1�o

2019�2�4�i�j24� ��

2

GPU������������������GPU���� �����

2019/1/8 ������������! �� 3

GPU;�/.��$!: GPGPU• GPU: Graphic Processing Unit, �����5END=><

• '&3��(3������32• 3������324�)8�!;��$!4��

• ��5G?CKO�"P4 ,0'&4$!-8.74(��5�!1���� ��$!4

• 10� �1�6�)*(HPC16�+�:90)8• �%16���#(Deep Learning)5��4

2019/1/8 BFAMHL@JIM@OQPO�P 4

5

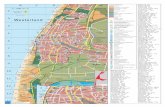

Green 500 Ranking (November, 2018)TOP 500

Rank System Cores HPL Rmax(Pflop/s)

Power(MW) GFLOPS/W

1 374 Shoubu system B, Japan 953,280 1,063 60 17.604

2 373 DGX SaturnV Volta, USA 22,440 1,070 97 15.113

3 1 Summit, USA 2,397,824 143,500 9,783 14.668

4 7 ABCI, Japan 391,680 19,880 1,649 14.423

5 22 TSUBAME 3.0, Japan 135,828 8,125 792 13.704

6 2 Sierra, USA 1,572,480 94,640 7,438 12.723

7 444 AIST AI Cloud, Japan 23,400 961 76 12.681

8 409 MareNostrum P9 CTE, Spain 19,440 1,018 86 11.865

9 38 Advanced Computing System (PreE), China 163,840 4,325 380 11.382

10 20 Taiwania 2, Taiwan 170,352 900 798 11.285

- - Reedbush-L, U.Tokyo, Japan 16,640 806 79 10.167

- - Reedbush-H, U.Tokyo, Japan 17,760 802 94 8.576

http://www.top500.org/

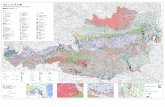

Reedbush-H��������

NVIDIA Pascal

NVIDIA Pascal

NVLinK20 GB/s

Intel Xeon E5-2695 v4 (Broadwell-

EP)

NVLinK20 GB/s

QPI76.8GB/s

76.8GB/s

IB FDRHCA

G3

x16

15.7 GB/s 15.7 GB/s

DDR4���128G

B

EDR switch

EDR

76.8GB/s 76.8GB/s

Intel Xeon E5-2695 v4 (Broadwell-

EP)QPIDDR4DDR4DDR4

DDR4DDR4DDR4DDR4

���128G

B

PCIe swG

3 x16

PCIe sw

G3

x16

G3

x16

G3 x16

G3 x16

IB FDRHCA

2019/1/8 ������� �������� 6

��GPU!2(-3$�2 8

P100 BDW KNL

��� �5GHz6 1.480 2.10 1.40

!��5��"0#%�6 3,584 18 (18) 68 (272)

�����5GFLOPS6 5,304 604.8 3,046.4

����5GB6 16 128 16

+,/&2%�5GB/sec., Stream Triad6 534 65.5 490

�� Reedbush-H�GPU

Reedbush-U/H�CPU

Oakforest-PACS�CPU (Intel Xeon

Phi)

"'!2)1 .*2 5765�6 7

• ������4

2019/1/8

GPU=@9?>A91�'!+$G• CPUF �(/:75$)-&��

• Reedbush-H 0 CPU : 2.10 GHz 18:7• �(/:7…� ��#<8=?8A��#Out-of-Order

• �1/6.3.(4

• �0��'��

• GPUF �*/:75,)*6��• Reedbush-H 0 GPUF 1.48 GHz 3,584 :7• �*/:7... ����'�$, 2,1/$B• ����'�"

;<:A=@9?>A9CEDC�D 8

GPU0!+*1. ��=@9?>A9��0!+*2. �0:75��)�%!+*

2019/1/8

���NVIDIA Tesla P100

��� ��� ������� 9

• 56 SMs• 3584 CUDA

Cores• 16 GB HBM2

P100 whitepaper��

2019/1/8

���NVIDIA Tesla P100 � SM

��������� �� �� 102019/1/8

QNVIDIA�/GPU• ! 9IM;

• GeForce• 8L9GMB�)&��&

• Tesla• HPC�)&������%��#DEI%ECC3�'2*1$�&

• 4M5>6<FN��O1. TeslaQ��/HPC�)GPU%TSUBAME1.2-,2. FermiQ2���%TSUBAME2.0-,• ECCDEI%FMA��%L1 L2 5F=9G

3. KeplerQ��HPC.+�(��%TSUBAME2.5-,• 9F=@J��%Dynamic Parallelism%Hyper-Q

4. MaxwellQ8L9GMB�)/05. PascalQ��GPU%Reedbush-H.�"• HBM2%����% NVLink%���atomicAdd -,

6. VoltaQ���GPU• Tensor Core-,

112019/1/8 :?8LAK7HCL7NPON�O

���"��(�GPU&�• CPU#��&GPU78:•��+�� )%'0;23 >>/, •���0;23��#��• Warp ��&��•*!"'��$�Warp��•/,;03,-10

04/=5<.96=.>@?>�? 122019/1/8

CPU���GPU124

• � CPU�#�!%• �����,-'*124�,7+�"$�$��

*.)6/5(306(8;98�9 13

(

( (

(

), 10

OS�����% OS �����

<:� &��

;:���,7+&�%

=:� ��&��

(

/ 2 3

2019/1/8

��5�'(2,/AVGJ�>>=6�• AVGJ�

• CPU^ AVGJ�==6� (!"��AVGJ)• GPU^ AVGJ�>==6�*4~ (��~���AVGJ)

• ���/�.TDZA*.�-�#,34

• ��^!�=XH:AIA7GF,34QRTV7HX? �• CPU : V@AEYAEG;.��/OS%DMI896)�$(�#)• GPU : KZJ896>OZI)=AI01CW

• QRT6;BA,34�+��(AIZU),�.AVGJ5��

AL=XNW<SPX<[]\[�\

14

1core=1AVGJ.*&

QRTread� QRTread��

1core=NAVGJ.*&

2019/1/8

%��<G=>��.��• ;82��1 �

• 1 SM 2�1 64 CUDA core&56 SM - 3584 CUDA core• 1 CUDA core )!�<G=>7��

• <G=>$2��• ��SM�2<G=>$3��-*6

• ��13��<G=>@H=9�

• �06SM2<G=>$3��-*0(• ��+6,413GPU2��7��+6�"'5• atomic ��3

• CDF#�2��• L1 cache, shared memory, Instruction cache 0/3SM�-��• L2 cache, Device memory 0/3�<G=>-��

<?;IAH:EBI:JLKJ�K 15

�3P1002�

2019/1/8

Warp ��8��• "�-032DMFHA1�� = Warp 5�;• ,8Warp9!�<�(3+

• ��/>��932DMFH�3�.• GPE9#13''

DICOJNBLKOBRUSR�S 16

4 3 5 … 8 0DMFH 1 2 3 … 31 32

$ A

$ B 2 3 1 … 1 9

% % % … % %

4 3 5 … 8 0DMFH 1 2 3 … 31 32

$ A

$ B 2 3 1 … 1 9

& % T … − %

OKQ NGQ

2019/1/8

Volta��)=9��*�@1084��V DMFH*��-3����- �*��/?:�2,579�@=6'

��������Warp��• Divergent Branch

• Warp ����!���Warp����� OK�

'+%2,1#/-2#4654�5 17

::

if ( TRUE ) { ::

} else {::

}::

::

if ( ��'0(* ) { ::

} else {::

}::

��'0(*"� ����"� �$3'��32��%')

else ������&.2,

2019/1/8

72F8=2498

• �Warp�'8F:=���2=F8&�&2498�.'�BCE' �����

• �/172F8=2498(coalesced access)%*

8?7H@G5DAH5JLKJ�K 18

32�'BCE2498��0/.

BCE2498�1�$�,�

;>38BCE

8F:= 1 2 3 4 … 32…

8F:= 1 2 3 4 … 32

128>3<��$BCE2498�Warp�'2498�128>3<&�+"#/)1���//) '�!��-�����6I8$(32�'78<

2019/1/8

GPU�;[`Q^\aQ#�• CUDA (Compute Unified Device Architecture)

• NVIDIAGGPU�;0%#�3C-. HCUDA CC>ANVIDIA8K2Fortran HCUDA FortranC>APGI("�HNVIDIAG��()8K��=MA5L3

• OpenACCf�'�N$5A�N,6[`Q^\aQ#�3C-.CFortranG��G��9�IKMA5L3PGISaZO^ED�@8GSaZO^9��3cGPU9�EVbRWX?9dGPU�$-.BHE53• c!F�)E[`Q^]F75AHdOpenACCBJCUDABJ �G�*9�L<CJ4L92�+&FHCUDAG�91/

• _PTbSbY94L��HOpenACCB�:�9HL8F�

192019/1/8 UZSa[`Q^\aQcedc�d

OpenACC• !�

• �7B:5?=B9(PGI, Cray-,)&��. '*%(��4��'�"!�� (http://www.openacc.org/)

• 2011��.OpenACC 1.0��/��0OpenACC 2.5

• 7B:5?

• ��GPGI, Cray, PathScale

• PGI 0���2�'*%3

• ���GOmni (AICS), OpenARC (ORNL), OpenUH (U.Houston)

• ;@CGGCC 6.x

• $�� ($���G https://gcc.gnu.org/wiki/Offloading)

• �.01)#%

8:7B<A6?>B6DFED�E 20

RB-H+0PGI7B:5?4�%3

2019/1/8

OpenACC �OpenMP ��������

���������������� 21

OpenACC

���CPU

OpenMP

CPU

1����

CPU

int main() {

#pragma …for(i = 0;i < N;i++)

{

…

}

}

2019/1/8

OpenACC�OpenMP ��

'.#!�CPU��

MEMORY

��65

��65

��65

��65

��65

��65

��65

��65

OpenMP����1�$�#+

22

CPU(s)

• ��!��N�• N < 100 � (Xeon Phi��)

• �)*-

���������1�$�#+����

2019/1/8 "%!0&/ ,(0 2432�3

• ��1,N�+M��$��• N > 1000 +�• ��M(,/3ACF4&)*

• <28-79-2$ �"#>?B• <28-79-2�7F4��(��

,/3ACF4+� #�����

MEMORY(<28)

CPU(s)

MEMORY(79-2)

OpenACC%OpenMP '��OpenACC '�,F.6/5@

23

��'��(��,F.6/5@'��!

2019/1/8 2:1E;D0A=E0GIHG�H

OpenACC4OpenMP 8��• OpenMP4�,;8

• Fork-Join4%&��72)MQI���

• OpenMP76)3OpenACC7$<;8• JAE4DG>A4%&��

• JAE-DG>A!8DQB��• �" 8����

• OpenMP7$03OpenACC76%;8• ANCFID=�%/��65

• OpenMP8omp_get_thread_num()7��-<;8'�%

• .8�#�=1*<:( %• OpenMP4�:3OpenACC9��7�&+4'�%

• ��DQB#���65=���8�9��7��

242019/1/8 AH@PIO?LKP?RTSR�S

OpenACC;OpenMP >�$OSIXP:>�>�-• OpenMP

• �' shared• OpenACC

• MJW�_ firstprivate or private• (�_ shared

• TYKWV�>parallel/kernels��=�5�/87�*OpenACCLZRHW?!= "<O[NG��:#&6D

• �52#&4E<-3;C,D+��:�2A1• ��=�5�/D7@=#&0!FED()��)+�%>data���G�-9��:�2A1

• (�?OQHM=��4ED (shared��D -)• (��Gprivate=�.7B=? private ����.

MRLZTYKWUZK\^]\�] 252019/1/8

GPU%-�*'.�����

OpenACC with Unified Memory

�$�.%-�*'.�0210�1 26

�

OpenACC OpenMP

omp parallel do ���

OpenACC with �/����

�/#+�CUDA�

OpenACC ��/#+�)/".�

�/�&#/�(. ��

�,�!����

intrinsic ����SIMD�

�������SIMD�

2019/1/8

Reedbush-H�����OFP������

2019/1/8 ������ ��� ������ 27

�&��@1/2A1. 6:-0+ !��)2. ��&,4;1+���)

https://reedbush-www.cc.u-tokyo.ac.jp/3. �9?0��%2>3?�(��* �������+�*)�

4. �51=?4�%�2>3?�(��* �51=?4�+���)�$�����*"�)51=?4'51=?4#'$��

15/>7<.:8>.@BA@�A 282019/1/8

Reedbush)?0/AØ 4B;6=�+���.��",

$ ssh reedbush.cc.u-tokyo.ac.jp -l tYYxxx�-l�(7/9A%��'F��tYYxxx�(�����C�D

�tYYxxx�(������.�-,Ø �",�%��-,'$� yes .�-,Ø �'��&�-#�� �*#82@B5C829>B3D.�-,

Ø ��",%�?0/A $!,

281A:?0<;A0CEDC�D 292019/1/8

MIHAU\8#�81,3

• MIH��9)Altair�8MIHEGJT PBS Professional5��0=4+:2*

• ��)�!DR[L>&�1:2*• FVP8��a

qsub <FVPGBXQKO?@Y>• ��-��13FVP8���%a rbstat• ��FVP8�(a qdel <FVPID>• MIHAU\8��>"<a rbstat --rsc• MIHAU\8$���>"<a rbstat –rsc -x• �/;=4+<FVP�>"<a rbstat -b• '�8����>"<a rbstat –H• �7��5.<�_� 5.<�>"<a rbstat --limit

GND[QZCWS[C]`^]�^ 302019/1/8

OFP68 �a

pjsub => qsubpjstat => rbstat

#!/bin/bash#PBS -q h-lecture#PBS -Wgroup_list=gt06#PBS -l select=8:mpiprocs=36#PBS -l walltime=00:01:00cd $PBS_O_WORKDIR. /etc/profile.d/modules.sh

mpirun ./hello

JOB.)=82,A8>"��C6:&IJHDChello-pure.bash, C���Fortran����D

.5+A8@*<9A*CFDC�D 31

=0B.*>B8�Gu-lecture

��*>B8�Ggt27

MPI-;7%8E36 = 288 8@/.!���#�

��4B3

4B3���+& CIJH8@/. D

������GF�

(?A21'?)2=���� �C���$ ��D

2019/1/8

�����$(�

• �����$(�,

• h-lecture6• �10���• � (��2 (�(4GPU) ��

• ������)24��*��$(�,• h-lecture

• ����������$(����

�!�'"&�%#'�)+*)�* 322019/1/8

Reedbush'��.�• /home @13J79:F)�������L53M'�&@13J"�/��!+(��%��

• /home '��!@13J)��>N=�-� %�* 0�8GA(��,%�* 0�

=> L53M�) /lustre @13J79:F/�#$�"���

• CNF;2K4<I: /home/gt16/t16XXX• cd 6DM=%��%�*��

• Lustre;2K4<I: /lustre/gt16/t16XXX• cdw 6DM=%��%�*��

9?6MBL5HEM5OQPO�P 332019/1/8

OpenACC����

��� ��� ������� 342019/1/8

OpenACC ������• ������

• kernels, parallel• (��������

• data, enter data, exit data, update• %("�����

• loop• ���� ��������

• host_data, atomic, routine, declare

�!�'"&�$#'�)+*)�* 352019/1/8

��&������eparallel, kernels•IKP[]aQ�;�#7B2%�H��

• OpenMP@parallel���?��• 2�'@����eparallel, kernels

• parallele(=9F1<-.<) WSYI\• OpenMP?$-• *441F44C;A���#&�;7)����>=AZaNa�;��5C7+

• kernelse(=9F1<-.<) "�• *441F44C;ARTJO��#&�;7),<A0�85C7+

• 1-���`�H�/:-3<�!�?6E.>�?>G@;(=9FH�.1A D• =9F1<-.<kernels��

362019/1/8 OUM_V^L[X_Lbdcb�c

kernels/parallel���kernels

program main

!$acc kernelsdo i = 1, N

! loop body end do

!$acc end kernels

end program

parallel

program main

!$acc parallel num_gangs(N)!$acc loop gang

do i = 1, N! loop body

end do !$acc end parallel

end program

��������� �� �� 372019/1/8

kernels/parallel��kernels

program main

!$acc kernelsdo i = 1, N

! loop body end do

!$acc end kernels

end program

parallel

program main

!$acc parallel num_gangs(N)!$acc loop gang

do i = 1, N! loop body

end do !$acc end parallel

end program

(,'2-1&0/2&3543�4 38

.(*� )+%(�

• .(*-)+%($� �"��kernels

• ��������"��$� �"��parallel

��� )+%(��#������� ���N�!�������

2019/1/8

kernels/parallel�������kernels

• async• wait• device_type• if• default(none)• copy…

parallel

• async• wait• device_type• if• default(none)• copy…• num_gangs• num_workers• vector_length• reduction• private• firstprivate

��� ��� ������� 392019/1/8

kernels/parallel� ?��kernels parallel

• async• wait• device_type• if• default(none)• copy…• num_gangs• num_workers• vector_length• reduction• private• firstprivate

04/:59.86:.<>=<�= 40

parallel$(������$�+!%,��"+#)����*��'�,�)+�� �+�

�����&��+�

��23-0�&487;1,��

2;1� '��,��+

2019/1/8

kernels/parallel ��� ��Fortran C��

��������������� 41

subroutine copy(dst, src)real(4), dimension(:) :: dst, src

!$acc kernels copy(src,dst)do i = 1, N

dst(i) = src(i)end do

!$acc end kernels

end subroutine copy

void copy(float *dst, float *src) {int i;

#pragma acc kernels copy(src[0:N] ¥dst[0:N])

for(i = 0;i < N;i++){dst[i] = src[i];

}

}

2019/1/8

kernels/parallel ��$(�Fortran

���'!&�%#'�)+*)�* 42

subroutine copy(dst, src)real(4), dimension(:) :: dst, src

!$acc kernels copy(src,dst)do i = 1, N

dst(i) = src(i)end do

!$acc end kernels

end subroutine copy

("��) (����)

dst, src �dst, src ���������

dst, src ���� (���

dst_dev, src_dev

dst’_dev, src’_devdst’, src’

�dst’_dev, src’_dev ���� (���

�dst, src ��������

�����

����

2019/1/8

SULP�<�/S_Q?:.;

• W]NZY�@parallel/kernels��?�6�098�+OpenACCO^VLZA�$?�%>S_QK"�<&(7H

• �63&(5I>.4=E-H,"�<�3C2

• ��?�6�0H8B?&(1$JIH(*��), '@data���K�.;"�<�3C2

• "�&(Adefault(none)<��<2H• PMZ�A firstprivate =6;�JIH

• �� ?FG��!

• )�ASULP?��5IH (shared��H#.)• )��KP\RT]_M[?�/8D?A private K�7H

PVO^W]NZX^N`ba`�a 432019/1/8

"*!�������������Fortran C �

#�)$(�&%)�+-,+�, 44

subroutine copy(dst, src)real(4), dimension(:) :: dst, srcdo j = 1, M

!$acc kernels copy(src,dst)do i = 1, N

dst(i) = dst(i) + src(i)end do

!$acc end kernelsend do

end subroutine copy

void copy(float *dst, float *src) {int i, j;for(j = 0;j < M;j++){

#pragma acc kernels copy(src[0:N] ¥dst[0:N])

for(i = 0;i < N;i++){dst[i] = dst[i] + src[i];

}}

}Kernels �'*$����,

HtoD��=>�=>DtoH�������…

2019/1/8

data� Fortran C��

)+(1-0'/.1'2432�3 45

subroutine copy(dst, src)real(4), dimension(:) :: dst, src

!$acc data copy(src,dst)do j = 1, M

!$acc kernels present(src,dst)do i = 1, N

dst(i) = dst(i) + src(i)end do

!$acc end kernelsend do

!$acc end data end subroutine copy

void copy(float *dst, float *src) {int i, j;

#pragma acc data copy(src[0:N] ¥dst[0:N])

{for(j = 0;j < M;j++){

#pragam acc kernels present(src,dst)

for(i = 0;i < N;i++){dst[i] = dst[i] + src[i];

}}}

}

C!���data� !��"{}���(�!��"for���,0*& ���$!����#�����)

present: �����$��% �

2019/1/8

data������

2019/1/8 ��������������� 46

(���) ( ��)

dst, src dst_dev,src_dev

��

dst’_dev,src’_dev

��

(���) ( ��)

dst, src dst_dev,src_dev

��

dst’_dev,src’_dev

dst’, src’

��

��

��

dst’, src’

180����<180��� ��•���%+180)� )��• ���)���#��

• ��<Fortran'C&����#�(+•����A,��%+�

47

! ,, , ( 1 : $ 1 : # #

! ,,

Fortran�

C� 2 ,, , () 1 ) 1 #

fortran&*�!'�!,��

C&*�"' $,��

2019/1/8 /2.736-547-9;:9�:

&���E?G1GKB���• OpenACC 05<H>@9&��3"�

• gang, worker, vector43&�• gangOworker4��� )2��• workerOvector4�• vectorO<H>@3��,7���+(����

• loop ���• parallel/kernels�4GKB4�(3.(/��

• AFDK=4%�5'7 ��36-/*87

• #�(gang, worker, vector)4��• GKB����4��4��

48

!$acc kernels!$acc loop gang

do j = 1, n !$acc loop vector

do i = 1, ncc = 0

!$acc loop seqdo k = 1, n

cc = cc + a(i,k) * b(k,j)end doc(i,j) = cc

end doend do

!$acc end kernels

GPU04$!4�

2019/1/8 <A;JBI:FCJ:LNML�M

�����6/:�#>%.&-7• OpenMP�1��

• 3:-(#CPU 1��• ���2���(SIMD)��!

• CUDA� block � thread �2��• NVIDA GPU 2��

• 1 SMX ��CUDA core"�• �(#�SMX�9+>)"��

• OpenACC�3��• ���#&*8;>,����!��

49

• NVIDIA GPU� �

49

GPU

/0$)569

SMX

CUDA(#

2019/1/8 )1(=2<'84='?A@?�@

OpenACC ) Unified Memory• Unified Memory ).…

• ���,��-CPU)GPU-PQT6�%�0�&-PQT-1�,����

• Pascal GPU(.HYG9:7?MYF• LYAJ;UF �"3),N8=VY@RX$'!43• Kepler��0��3 \CJF9:7��+-(/*!��

• OpenACC ) Unified Memory• OpenACC,Unified Memory6� ����.+�• PGI>XI8S, managed <K@RX6��3")(��3

• pgfortran –acc –ta=tesla,managed• ��)EYD��� ��#4��52,Unified Memory6��

BI>XKW=SOX=Z][Z�[ 502019/1/8

Unified Memory5NQEGTFNQEG

• NQEG• FUD��5��<�.8;:

• L?SD325�$3FUD�!<��4�(:• � 6NOQ�#*�);0':/7&F>UJAIU�%*��-:

CHASJR@PMS@VXWV�W 51

• FNQEG– KUB��1� -:/7&�)'� *�3�46"+3:– CPU�5NOQ��<��,0':51&allocate, deallocate<�9�-=JQ16CPU�*��4"+3:

2019/1/8

OpenACC�������������

� �������������� 522019/1/8

J[aOfQ_e>OpenACC��-1. [dZIKaeN=CE\VbXTM+�>�2. \VbXTM+�>OpenACC�

1. �� #3<13>�%

2. (OpenACC>��=�G8:[dN`^>�5�2)3. parallel/kernels�!�*�

3. data�!�=CEUfS&(>�*�4. OpenACCLfXb>�*�

1 ~ 4 H"D'7*�/9F;A)6F@.

5. LfXb>CUDA�• RcTW,> ���4�0J[aOfQ_e;?.shared

memory B shuffle ��H$�=�2ECUDA>�4���=��

RYPe[dN`]eNgihg�h 532019/1/8

�1OpenMP�+8-'7:JO>T@MS2OpenACC� $1. !$omp parallel 9!$acc kernels1���1�*�(2. Unified Memory 9�'%/6&(,GPU�.��3. ?SI;N2LECTA9�0)5%OpenACC<THP2�"�

4. FTD���9�'- !2�"

5. <THP2CUDA�• BQEG#2����)�':JO>[email protected]%shared

memory 4 shuffle �9��1�(7CUDA2�)��1��

BI?SJR=NKS=UWVU�V 542019/1/8

�&�����������

���% $�#"%�')('�( 55

main

sub1

sub3

sub2

subB

subA

int main(){double A[N];sub1(A);sub2(A);sub3(A);

}

sub2(double A){subA(A);subB(A);

}

subA(double A){

for( i = 0 ~ N ) {…

}}

����� �OpenACC�����

!�� ����

2019/1/8

-8,��#%�����

+0*726)547):<;:�; 56

main

sub1

sub3

sub2

subB

subA

int main(){double A[N];sub1(A);sub2(A);sub3(A);

}

sub2(double A){subA(A);subB(A);

}

subA(double A){#pragma acc …

for( i = 0 ~ N ) {…

}}

subA

� ���"������ '�$&%#���%9� ����!�����9

3+. -/(+

data����A'*18

2019/1/8

�'������� ���

���&!%�$#&�(*)(�) 57

main

sub1

sub3

sub2

subB

subA

int main(){double A[N];sub1(A);

#pragma acc data{

sub2(A);}sub3(A);

}

sub2(double A){subA(A);subB(A);

}

subA(double A){#pragma acc …

for( i = 0 ~ N ) {…

}}

subA

����'����������

"�� ����

data������A�� ' sub2

subB

2019/1/8

>K= ��+35�����

<B;JDI:GFJ:LNML�M 58

main

sub1

sub3

sub2

subB

subA

int main(){double A[N];

#pragma acc data{

sub1(A);sub2(A);sub3(A);

}}

sub2(double A){subA(A);subB(A);

}

subA(double A){#pragma acc …

for( i = 0 ~ N ) {…

}}

subA

$$/)�(4�3 2#��,9K@H,���7�05��>K=,����"��+��%#*6.��,)�!*4'&1���/)25��-*�

E<? >A8<

sub2

subB

main

sub1

sub3

data ��)��A7;CK

2019/1/8

PGIRh]NdAHKbXViTB�$��• Rh]NdbXViTB�$COpenACC=C�F<)!

• OpenMP >',*• ���A��6K8F*����=/K^gPdaG��4L@,3>.+K

• ��6D/ei^. �+K8F*?Bei^A?B� (gang, worker, vector).�J�1IL8-5K8F

• WiQXZY\NUB�%�*�9�2KD/UfX[�.��A�EI7*UfX[.,0;�9�.:8-�K8F

• �#>5<C*IntelRh]NdB�(f_iZM"@.IBSIMDA&,

• bXViTM"<*^gPdaM(���6K

• Rh]NdbXViT���• Rh]NdO^SchA -Minfo=accel M;1K

U]Rh^gPd`hPjlkj�k 592019/1/8

�����*&

• PGI�)!�%�����'#* • pgfortran -Minfo=accel

• ��� PGI_ACC_TIME• export PGI_ACC_TIME=1 ����%*�����������

• NVIDIA Visual Profiler• cuda-gdb

�!�)"(�%$)�+-,+�, 602019/1/8

PGI"6,�2���0*'7%��• "6,�2�.$16���

-Minfo=accel ����

&,"6.5!2/6!8:98�9 61

pgfortran -O3 -acc -Minfo=accel -ta=tesla,cc60 -Mpreprocess acc_compute.f90 -o acc_computeacc_kernels:

14, Generating implicit copyin(a(:,:))Generating implicit copyout(b(:,:))

15, Loop is parallelizable16, Loop is parallelizable

Accelerator kernel generatedGenerating Tesla code15, !$acc loop gang, vector(4) ! blockidx%y threadidx%y16, !$acc loop gang, vector(32) ! blockidx%x threadidx%x

….

"6,�20*'7%(fortran)

(7&"7+#-37)6�

��a�copyin, b�copyout��������

15, 16��;�37.�(32x4)�&4*+�-5* ��������

8. subroutine acc_kernels()9. double precision :: A(N,N), B(N,N)10. double precision :: alpha = 1.011. integer :: i, j12. A(:,:) = 1.013. B(:,:) = 0.014. !$acc kernels 15. do j = 1, N16. do i = 1, N17. B(i,j) = alpha * A(i,j)18. end do19. end do20. !$acc end kernels 21. end subroutine acc_kernels

2019/1/8

PGI_ACC_TIME� !OpenACC ����• OpenACC_samples #��

• $ qsub acc_compute.sh

• ���"!������!• acc_compute.sh.eXXXXX (��$4:��)

• acc_compute.sh.oXXXXX (����)

• $ less acc_compute.sh.eXXXXX

)0'817&428&;=<;�< 62

Accelerator Kernel Timing data/lustre/pz0108/z30108/OpenACC_samples/C/acc_compute.c

acc_kernels NVIDIA devicenum=0time(us): 149,10150: compute region reached 1 time

51: kernel launched 1 timegrid: [1] block: [1]device time(us): total=140,552 max=140,552 min=140,552 avg=140,552

elapsed time(us): total=140,611 max=140,611 min=140,611 avg=140,61150: data region reached 2 times

50: data copyin transfers: 2device time(us): total=3,742 max=3,052 min=690 avg=1,871

56: data copyout transfers: 1device time(us): total=4,807 max=4,807 min=4,807 avg=4,807

PGI_ACC_TIME � !��3,*:(

40. void acc_kernels(double *A, double *B){

41. double alpha = 1.0;

42. int i,j;

/ * A � B � � */

50. #pragma acc kernels

51. for(j = 0;j < N;j++){

52. for(i = 0;i < N;i++){

53. B[i+j*N] = alpha * A[i+j*N];

54. }

55. }

56. }

←����)6,.�

←-:+����9��

↓%:/5���

2019/1/8

��Vmodule@GPC4�'�• �&2@PD=LTMPI��21;�8�(9.54@GPC• DB7����21�"2$ )���3��+:9

• AKE� �36@PD=N�0,module;load-9*0

• ����2IAJQN4�#;!�Vmodule avail• ���4IAJQN;�%Vmodule list• IAJQN4load: module load IAJQN• IAJQN4unload: module unload IAJQN• IAJQN4�8�(Vmodule switch �IAJQN�IAJQN

• IAJQN;�/>M<: module purge

2019/1/8 BD@PFO?LHP?RUSR�S 63

:J@7G�/��: module:DJ?• =A8H>+0MIntel:J@7G+Intel MPI

• cf. module listCurrently Loaded Modulefiles:1) intel/18.1.163 2) intel-mpi/2018.1.163 3) pbsutils

• PGI:J@7G6�#��OKOpenACC4CUDA FortranL• module switch intel pgi/17.5

• CUDA����6�#��• module load cuda• ��C:J@7G3��

• MPI6�#��K:J@7G.��%*loadM:J@7G.!)(3/6�1L• module load mvapich2/gdr/2.3a/{gnu,pgi}• module load openmpi/gdr/2.1.2/{gnu,intel,pgi}

64

• ;FB��.3�&module6load• ���2�5'*3�"$M �.�

• ���PATH4LD_LIBRARY_PATH-,6��

2019/1/8 <@:JCI9GEJ9KNLK�L

�������� ������-���OpenACC�

��������������� 652019/1/8

��-���!/?6=6>-;8(OpenACC�)!� • C����%#Fortran���!4)*=�

Mat-Mat-acc.tar.gz•0:51,<624)*=mat-mat-acc.bash�!+9@�( h-lecture �& h-lecture6-=@6�( gt16 ����qsub �������• h-lecture : ����!+9@• h-lecture6: ����!+9@• Reedbush-H�"C+9@�" “h-”��$'

2019/1/8 13.?6>-;7?-ADBA�B 66

��-����*#(#)�'&���• ����$*!�����

$ cdw$ cp /lustre/gt16/z30105/Mat-Mat-acc.tar.gz ./$ tar xvfz Mat-Mat-acc.tar.gz$ cd Mat-Mat-acc

• ����������$ cd C : C� ����$ cd F : .1232/0� ����

• �����$ module switch intel pgi/18.7$ make$ qsub mat-mat-acc.bash

• ���������������$ cat mat-mat-acc.bash.oXXXXX

2019/1/8 "�*#)�'%*�+-,+�, 67

��-��������OpenMP�������• ��������� ��

2019/1/8 ����������������� 68

#pragma omp parallel for private (j, k)for(i=0; i<n; i++) {for(j=0; j<n; j++) {for(k=0; k<n; k++) {C[i][j] += A[i][k] * B[k][j];

}}

}

��-��� ��OpenACC�• ���GPU���

2019/1/8 �� �������������� 69

#pragma acc kernels copyin(A[0:N][0:N], B[0:N][0:N]) copyout(C[0:N][0:N])#pragma acc loop independent gang

for(i=0; i<n; i++) {#pragma acc loop independent vector

for(j=0; j<n; j++) {double dtmp = 0.0;

#pragma acc loop seqfor(k=0; k<n; k++) {dtmp += A[i][k] * B[k][j];

}C[i][j] = dtmp;

}}

��-��������OpenMP�����Fortran�• ��������� ��

2019/1/8 ����������������� 70

!$omp parallel do private (j, k)do i=1, n

do j=1, ndo k=1, n

C(i, j) = C(i, j) + A(i, k) * B(k, j)enddo

enddoenddo

��-��������OpenACC��Fortran���• GPU����

2019/1/8 ����������������� 71

!$acc kernels copyin(A,B) copyout(C)!$acc loop independent gangdo i=1, n!$acc loop independent vector

do j=1, ndtmp = 0.0d0

!$acc loop seqdo k=1, n

dtmp = dtmp + A(i, k) * B(k, j)enddoC(i,j) = dtmp

enddoenddo!$acc end kernels

��-����)4.2.3'10���

•���#������&!��

N = 8192Mat-Mat time = 8.184022 [sec.]134348.567798 [MFLOPS]OK!

��� ,5+������"&��%8���� � mat-mat-acc.bash.e* ��%MyMatMat NVIDIA devicenum=0

time(us): 6,864,586

�"$160GFLOPS

*-(4.3'1/4'6976�7 722019/1/8

Reedbush�������

2019/1/8 ������ �������� 73

ChainerMN• Chainer

• Preferred Networks %�����'��&2;B<=3/0@B)!�#!6>B:@B)

• Open Source Software• Python• GPU�� ���CUDA$cuDNN(��

• ChainerMN• Chainer!8=.4B1�• MPI (Message Passing Interface)• NCCL (NVIDIA Collective Communications Library)! �

• )<,-�!GPU� ��&����(���• ����!��"����! Allreduce��

2019/1/8 74,5+A7?*<9A*CEDC�D

0

0.1

0.2

0.3

0.4

0.5

0.6

0.7

0.8

0 2000 4000 6000 8000 10000

64 GPU(H)128 GPU(H)240 GPU(H)

Reedbush-H��ImageNet�

• ResNet-50• 100������• 64, 128, 240 GPU (RB-H)• ChainerMN 1.0.0

• OpenMPI 2.1.1, NCCLv2• Chainer 3.1.0 • python 3.6.1, CUDA 8, cuDNN7,

cupy 2.1.0

2019/1/8 75

Elapsed time (sec)

Accu

racy

���$�#�! $�&('&�'

# GPUs

100 epoch����

��

32 6������ (72%)

64 3��58�20� 72.0%

128 1��59�02� 72.2%

240 1��7�24� 71.7%

���%!�"��

Seq2seq����

2019/1/8 76

0

0.05

0.1

0.15

0.2

0.25

0 5 10 15 20 25

32GPU

64GPU

Elapsed time (hour)

BLE

U

# GPUs

15 epoch��

BLEU

32 24� ����(12 epoch)

(~23.6

%)

64 13.6� 23.5 %

• 15�����

• 32, 64 GPU (RB-H)

• ChainerMN 1.0.0

– OpenMPI 2.1.1, NCCLv2

– Chainer 3.1.0

– python 3.6.1, CUDA 8, cuDNN7,

cupy 2.1.0

�����������������

Reedbush1'*7Python���1. Module<CJ@/:J>?KH�42529IK@

• module avail/E=FKH�9��+IK@,7• module load chainer/2.0.0• module load chainerMN/1.0.0b2 openmpi/1.10.7/intel-chainerMN

• !�� N+(+��0)6� "�13 �

2. ����/��• :J>?KH�42Anaconda9�&• $7�3���%..N� 9�%(*7

3. ���1��/��• Anaconda5��/�8-%�• �#

2019/1/8 77>A<JBI;GDJ;LOML�M

Anaconda�����ChainerMN���

1. CUDA*"+0-�.0%$ module load cuda/8.0.44-cuDNN7

2. MPI*"+0-�Open MPI����

• CUDA Aware�MPI���• GPU Direct�����• MVAPICH2���,0���$ module switch intel-mpi openmpi-gdr/2.1.1/intel

3. Anaconda*"+0-�.0%$ module load anaconda3

2019/1/8 78

4. HOME�/lustre����• � &0%� /lustre�����$ export HOME=/lustre/gi99/i12345

5. Anaconda����create, activate$ conda create -n chainerMN python=3$ source activate chainerMN

6. cupy��/#$0-$ pip install -U cupy --no-cache-dir -vvv

7. cython��/#$0-8. chainer��/#$0-9. chainermn��/#$0-

#'!/(. ,)/ 1321�2

ChainerMN��• ��&!�$#&%"5+6=:';=/@�� �'��

• +72��$ qsub train_imagenet.sh

• +72����$ rbstat

• ���&����$ tail -f log-+72�.reedbush-pbsadmin0(Ctrl+C��)

• +72,(93.�: train_imagenet.sh#!/bin/sh#PBS -q h-regular#PBS -l select=32:mpiprocs=2#PBS -l walltime=04:00:00#PBS -W group_list=gi99

cd $PBS_O_WORKDIRmodule load cuda/8.0.44-cuDNN7 anaconda3module switch intel-mpi openmpi-gdr/2.1.1/intelexport HOME=/lustre/gi99/i12345 source activate chainerMN

mpirun --mca mtl ^mxm --mca coll_hcoll_enable 0 ¥--mca btl_openib_want_cuda_gdr 1 ¥--mca mpi_warn_on_fork 0 ./get_local_rank_ompi ¥python train_imagenet.py … >& log-${PBS_JOBID}

2019/1/8 ,1*<3;)84<)>A?>�? 79

9-=,):=3�Bh-regular

��):=3�Bgi99

��0=/�RB-H 320=/=64GPU

����� B4��

kgnc3<

1. [L10] GPU�6bdamRL?M=Q\VC��S3�%RL?M2'_4�H[>Top500, HPCG, Green500QP_�)RG\DO>

2. [L30~40] D]WN��EI (<RL?MOpenACC_"?I07R��E=GPU�N�+E�*_1�H[>ofnbS<R�FM��p

bdamel`ihm`orpo�p 80

<SkfjR:G\07t•L00: B^YM&�Q<>•L10t JZKO)@]U^A\<>•L20t ��#Q<>•L30t ��9$ �.OG\<>•L40t �89$ �.OG\<>-;Q�,_�.OG\>•L50t �A�$ �.OG\<>�/�<_X>�Lsq��T=5�_�!G\R�G\<>

2019/1/8

������� ��

�!���"��#����������

2019/1/8 ���!� ���!�$&%$�% 81

![HP).pdf · : HNO3 qr qr3 " ! ] = : BC KL- # " ! $ $ ' & % * ) ( ... & KL- rH& ÝÞß Ü idek g > 0?k 0\= ; hi ` J I H I H $ I H $ I H I H I H I H I H I H I H I H $ I H G G G 9 8 3](https://static.fdocuments.nl/doc/165x107/5dd1081bd6be591ccb63e317/hppdf-hno3-qr-qr3-bc-kl-.jpg)

![Cikgu Adura's Blog 2 | Shūrā al-kīmiyā’ | Ringkas dan Mudah...ChemQuest 2012 [SBPtria111-36] Which of the following are isomers of pentane c- —H H c- H H H H H H 1 and 11 1](https://static.fdocuments.nl/doc/165x107/6050baff16b1cf1cd641a3b3/cikgu-aduras-blog-2-shr-al-kmiya-ringkas-dan-mudah-chemquest.jpg)

![files.leprf.rufiles.leprf.ru/tip_proekt/rd-34455130097.pdf · L Z [ e b p Z 9.2. A g Z q _ g b y k h i j h l b \ e _ g b c i h k l h y g g h f m l h d m l h d h \ _ ^ m s _ ] h d](https://static.fdocuments.nl/doc/165x107/5f5b02d81d980d61174fb784/filesleprf-l-z-e-b-p-z-92-a-g-z-q-g-b-y-k-h-i-j-h-l-b-e-g-b-c-i-h-k.jpg)

![Анонсschool-int9.ru/wp-content/uploads/2017/12/7_nomer_2-1.pdf · 3 \ h ^ h i ( d \ _ ^ m d b). I h ^ h [ g f g h ] b f ^ j m ] b f k h h j m ` _ g b y f, i h k l j h _ g g u](https://static.fdocuments.nl/doc/165x107/602e5f7131d73f4ff32c0f56/school-int9ruwp-contentuploads2017127nomer2-1pdf-3-h-h.jpg)